Single Concatenated Input is Better than Independent Multiple-input for CNNs to Predict Chemical-induced Disease Relation from Literature

Chemical compounds (drugs) and diseases are among the keywords most often searched by biomedical researchers all over the world in the PubMed database of biomedical literature (according to a study in 2009). Working with PubMed is essential for researchers to gain insight into drugs' side effects (chemical-induced disease relations, CDR), which is essential for drug safety and toxicity. It is, however, a heavy burden for them, as PubMed is a huge database of unstructured texts that is growing very fast (~28 million scientific articles currently, approximately two deposited per minute). As a result, biomedical text mining has empirically demonstrated its great value to biomedical research communities. Biomedical text has its own distinct, challenging properties, attracting much attention from natural language processing communities. A recent large-scale study in 2018 showed that incorporating information into independent multiple-input layers outperforms concatenating it into a single input layer (for biLSTM), producing better performance than state-of-the-art CDR classification models. This paper demonstrates that the opposite holds for a CNN, for which concatenation is better for CDR classification. To this end, we develop a CNN-based model with multiple inputs concatenated for CDR classification. Experimental results on the benchmark dataset demonstrate that it outperforms other recent state-of-the-art CDR classification models.
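The two input schemes contrasted above can be pictured through the shapes they produce. The following is a minimal sketch, assuming NumPy arrays as stand-ins for learned embeddings; the toy dimension `d`, the token count and the random values are illustrative assumptions, not values from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
d = 4          # toy embedding dimension (assumption, chosen for readability)
n_tokens = 3   # tokens on a hypothetical shortest dependency path

# Three partial embeddings per token: word, POS tag and position.
# Random values stand in for embeddings that a model would learn.
e_w = rng.normal(size=(n_tokens, d))
e_pt = rng.normal(size=(n_tokens, d))
e_ps = rng.normal(size=(n_tokens, d))

# Single concatenated input: each token becomes one vector of length 3*d.
concatenated = np.concatenate([e_w, e_pt, e_ps], axis=1)

# Independent multiple input: the three embeddings stay in separate channels.
multi_channel = np.stack([e_w, e_pt, e_ps], axis=-1)

print(concatenated.shape)   # (3, 12)
print(multi_channel.shape)  # (3, 4, 3)
```

Both arrays hold the same numbers. Only the layout differs, and the layout decides whether a convolution filter sees all three kinds of information for a token at once or one kind at a time.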


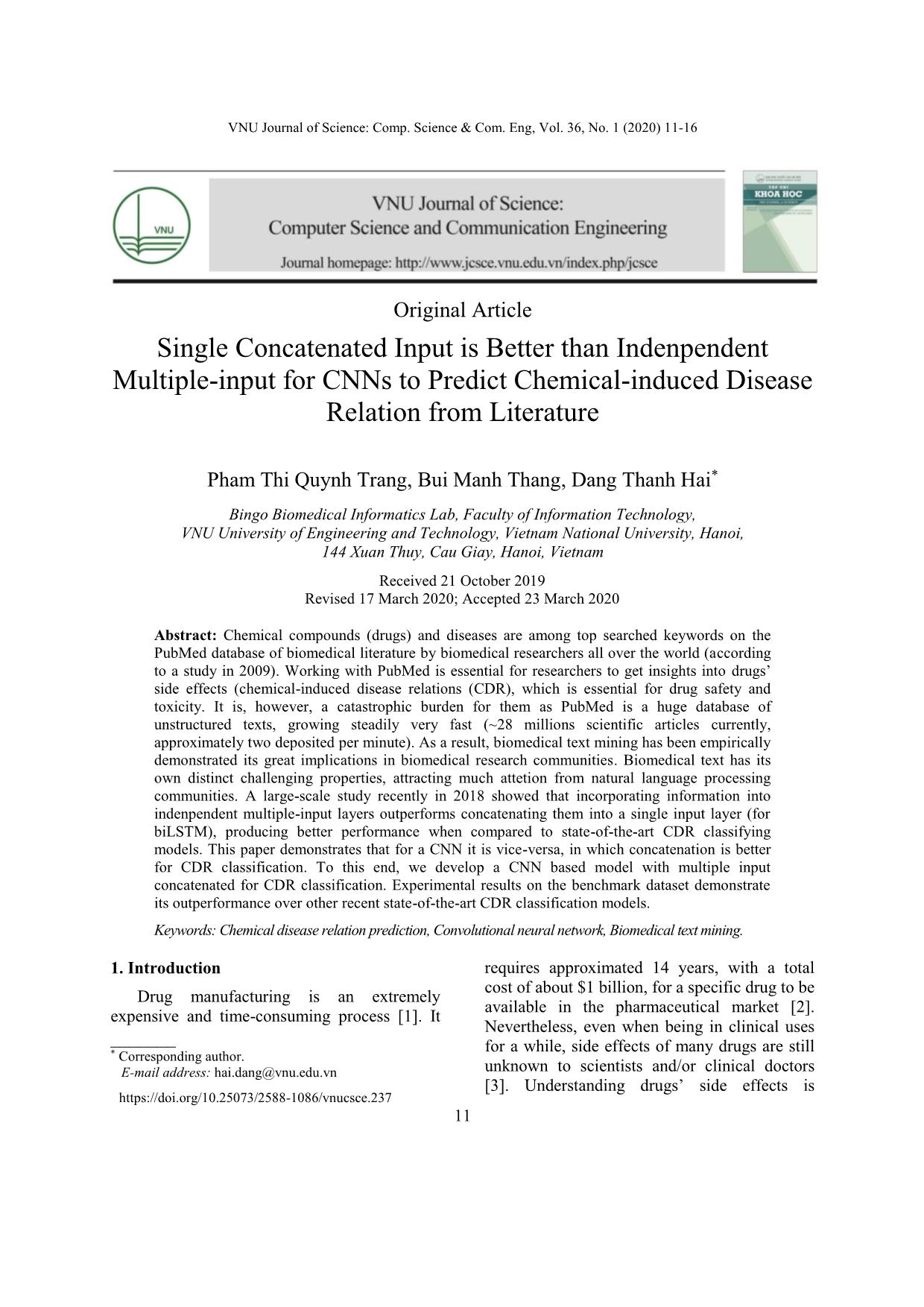

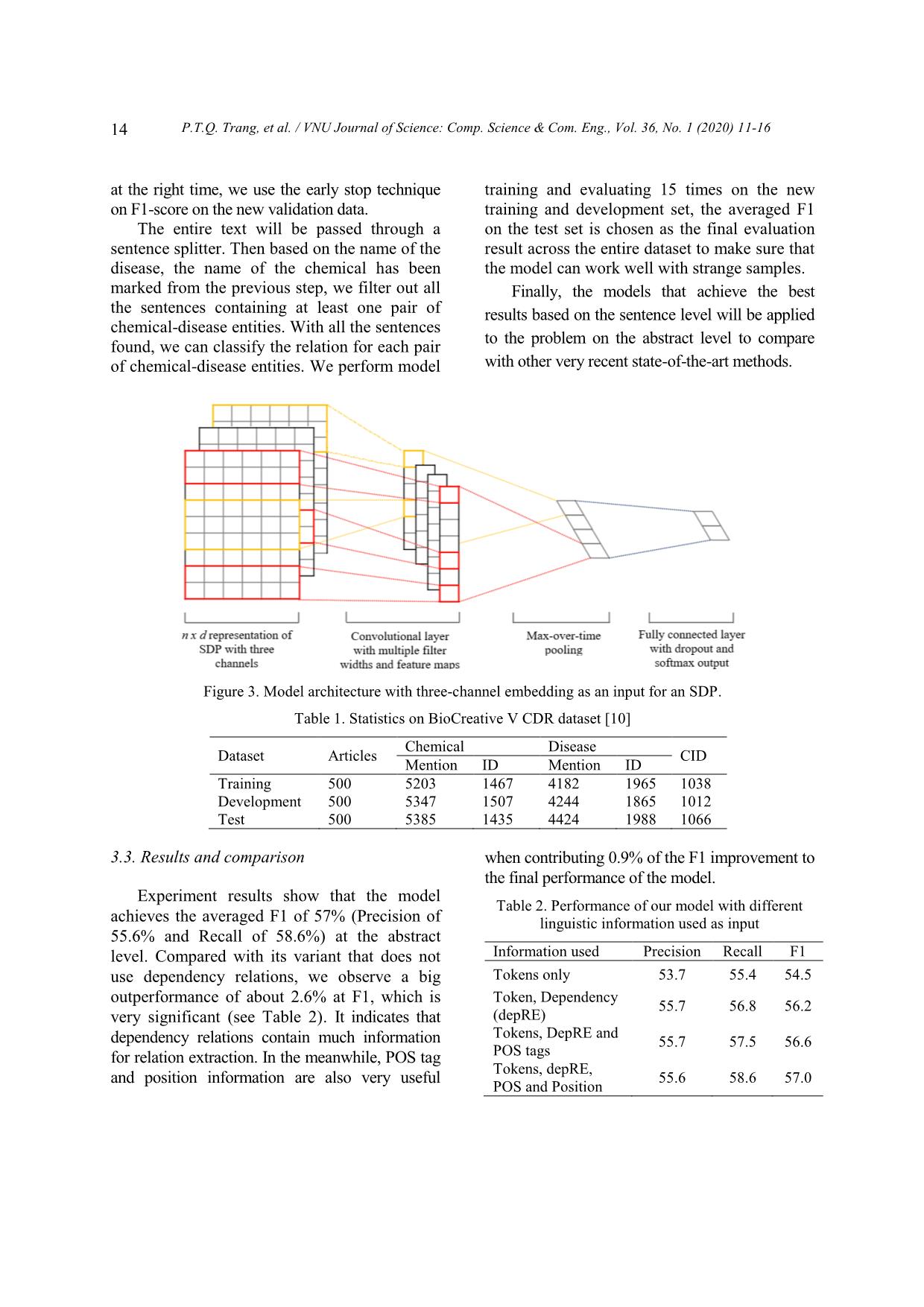

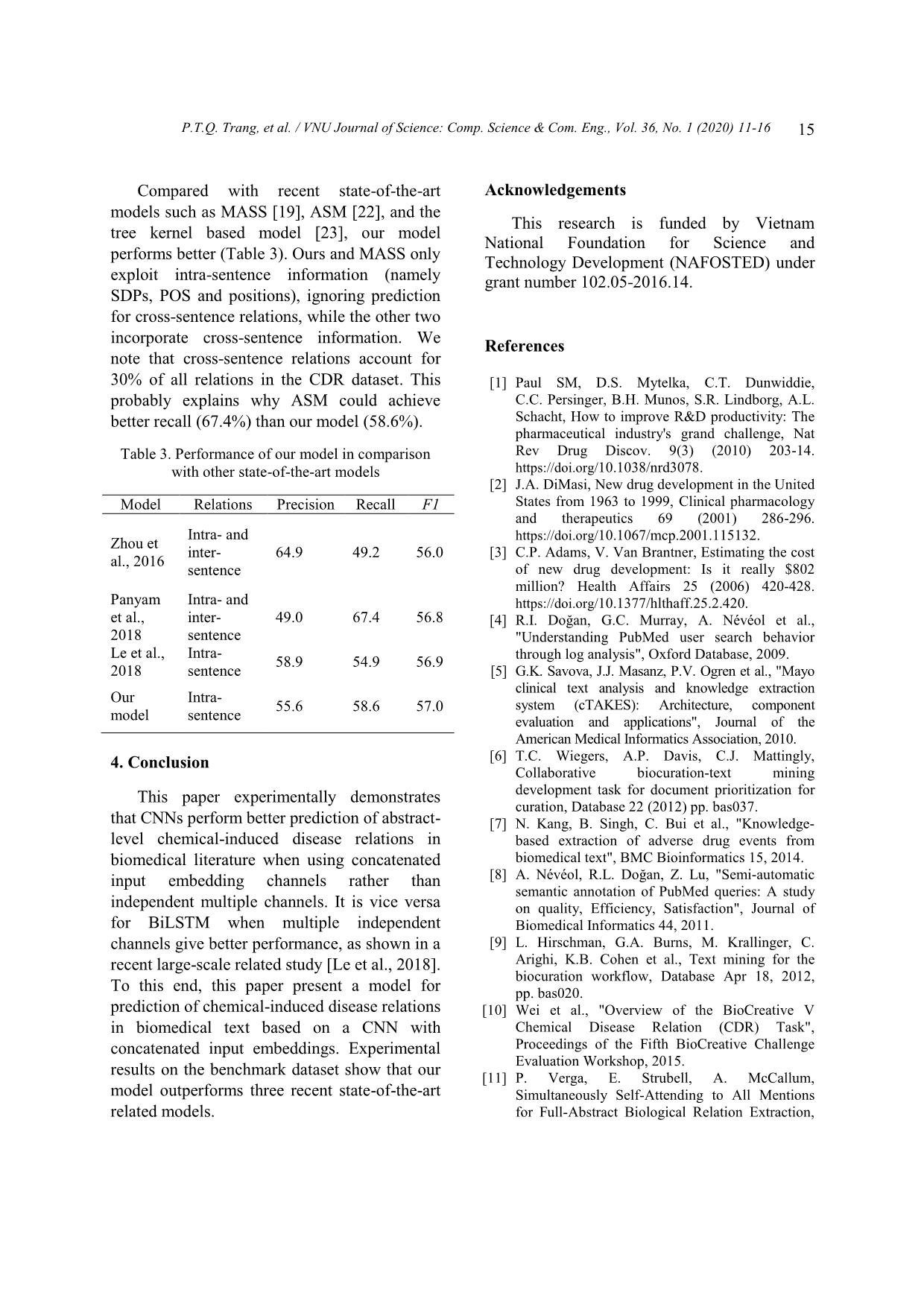

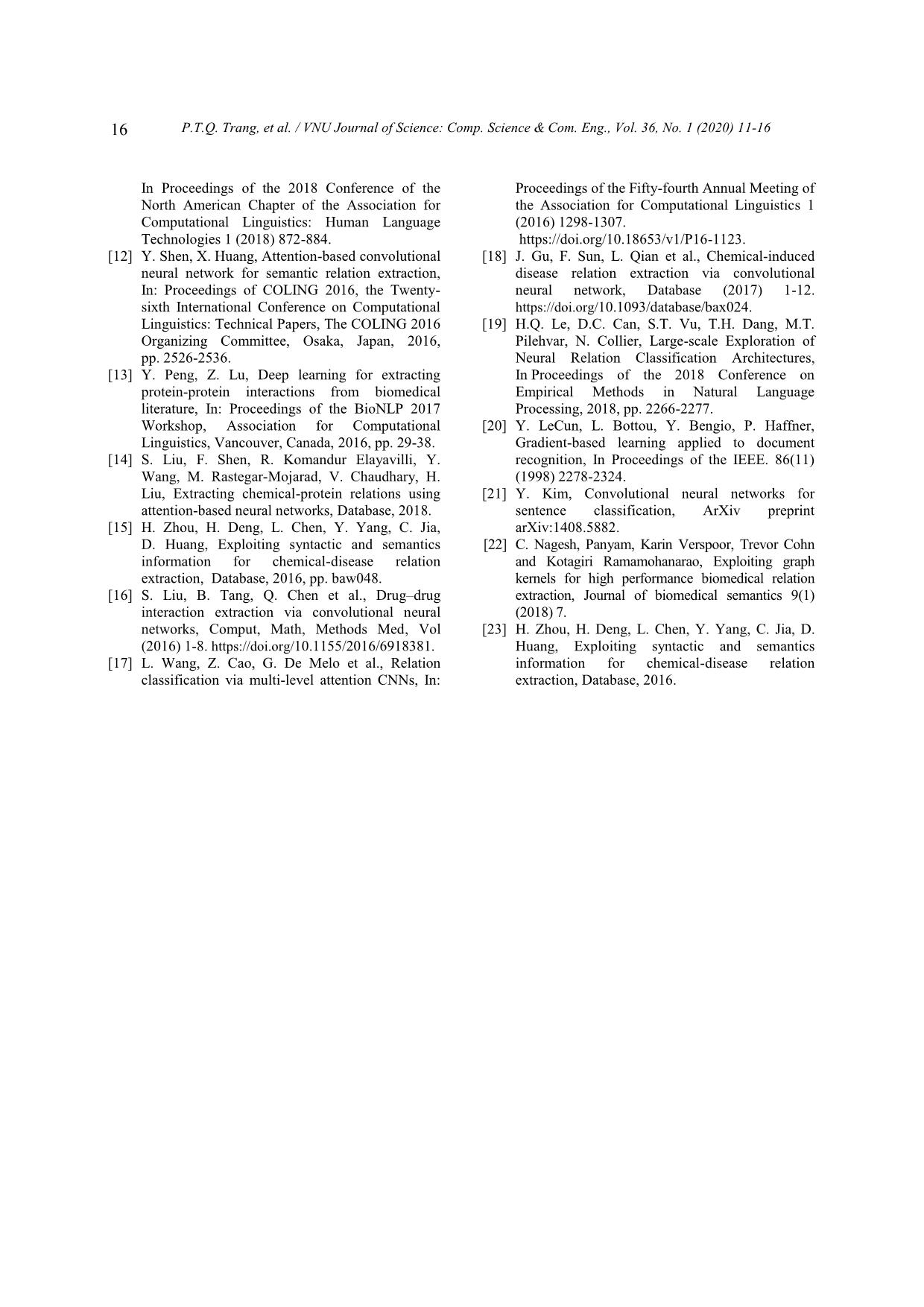

P.T.Q. Trang, et al. / VNU Journal of Science: Comp. Science & Com. Eng., Vol. 36, No. 1 (2020) 11-16

It can, however, be accelerated by applying biomedical text mining, here to drug (chemical)-disease relation prediction in particular. Biomedical text mining has empirically demonstrated its great value to biomedical research communities [5-7]. Biomedical text has its own distinct, challenging properties, attracting much attention from natural language processing communities [8, 9]. In 2004, an annual challenge called BioCreative (Critical Assessment of Information Extraction systems in Biology) was launched for biomedical text mining researchers. In 2016, researchers from NCBI organized the chemical disease relationship extraction task for the challenge [10].

To date, almost all proposed models only predict relationships between chemicals and diseases that appear within a single sentence (intra-sentence relationships) [11]. We note that the models that produce state-of-the-art performance are mainly based on deep neural architectures [12-14], such as recurrent neural networks (RNN) like bi-directional long short-term memory (biLSTM) in [15] and convolutional neural networks (CNN) in [16-18].

Recently, Le et al. developed a biLSTM-based intra-sentence biomedical relation prediction model that incorporates various informative linguistic properties in an independent multiple-layer manner [19]. Their experimental results demonstrate that incorporating information into independent multiple-input layers outperforms concatenating it into a single input layer (for biLSTM), [...] researchers.

The rest of this paper is organized as follows. Section 2 describes the proposed method in detail. Experimental results are discussed in Section 3. Finally, Section 4 concludes this paper.

2. Method

Given a preprocessed and tokenized sentence containing two entity types of interest (i.e. chemical and disease), our model first extracts the shortest dependency path (SDP) on the dependency tree between the two entities. The SDP contains the tokens (together with the dependency relations between them) that are important for understanding the semantic connection between the two entities (see Figure 1 for an example of the SDP).

Figure 1. Dependency tree for an example sentence. The shortest dependency path between two entities (i.e. depression and methyldopa) goes through the tokens “occurring” and “patients”.

Each token $t$ on an SDP is encoded with the embedding $e_t$ by concatenating three embeddings of equal dimension $d$ (i.e. $e_t = e^{w} \oplus e^{pt} \oplus e^{ps}$), which represent important linguistic information: the token itself ($e^{w}$), its part of speech (POS) ($e^{pt}$) and its position ($e^{ps}$). The former two partial embeddings are fine-tuned during model training. Position embeddings are indexed by distance pairs $[d^{l} \,\%\, 5,\ d^{r} \,\%\, 5]$, where $d^{l}$ and $d^{r}$ are the distances from a token to the left and the right entity, respectively.

For each dependency relation ($r$) on the SDP, its embedding has dimension $3*d$ and is randomly initialized and fine-tuned as a model parameter during training.

Each SDP is thus embedded into the $\mathbb{R}^{N \times D}$ space (see Figure 2), where $N$ is the number of all tokens and dependency relations on the SDP and $D = 3*d$. The embedded SDP is fed as input into a conventional convolutional neural network (CNN [20]), which classifies whether or not a predefined relation (i.e. chemical-induced disease relation) holds between the two entities.

Figure 2. Embedding by concatenation mechanism of the Shortest Dependency Path (SDP) from the example in Figure 1.

2.1. Multiple-channel embedding

For multi-channel embedding, instead of concatenating the three partial embeddings of each token on an SDP, we maintain three independent embedding channels for them. Channels for relations on the SDP are identical embeddings. As a result, SDPs are embedded into $\mathbb{R}^{n \times d \times c}$, where $n$ is the number of all tokens and dependency relations between them, $d$ is the embedding dimension, and $c = 3$ is the number of embedding channels.

To calculate feature maps for the CNN we follow the scheme of Kim 2014 [21]. Each CNN filter $f_i$ is slid along each embedding channel ($c$) independently, creating a corresponding feature map $\mathcal{F}_{ic}$. The max pooling operator is then applied to the feature maps created on all channels (three in our case) to produce a feature value for filter $f_i$ (Figure 3).

Figure 3. Model architecture with three-channel embedding as an input for an SDP.

2.2. Hyper-parameters

The model's hyper-parameters are empirically set as follows:

● Filter size: n x d, where d is the embedding dimension (300 in our experiments) and n is the number of consecutive elements (tokens/POS tags, relations) on SDPs (Figure 3).
● Number of filters: 32 filters of size 2 x 300, 128 of 3 x 300, 32 of 4 x 300, 96 of 5 x 300.
● Number of hidden layers: 2.
● Number of units at each layer: 128.
● Number of training epochs: 100.
● Patience for early stopping: 10.
● Optimizer: Adam.

3. Experimental results

3.1. Dataset

Our experiments are conducted on the BioCreative V data [10]. It is an annotated text corpus consisting of human annotations for chemicals, diseases and their chemical-induced disease (CID) relations at the abstract level. The dataset contains 1500 PubMed articles divided into three subsets for training, development and testing. Most of the 1500 articles (1400) were selected from the CTD data set. The remaining 100 articles, in the test set, are completely different articles that were carefully selected. All the data are manually curated. Detailed statistics are shown in Table 1.

Table 1. Statistics on BioCreative V CDR dataset [10]

| Dataset | Articles | Chemical Mention | Chemical ID | Disease Mention | Disease ID | CID |
|---|---|---|---|---|---|---|
| Training | 500 | 5203 | 1467 | 4182 | 1965 | 1038 |
| Development | 500 | 5347 | 1507 | 4244 | 1865 | 1012 |
| Test | 500 | 5385 | 1435 | 4424 | 1988 | 1066 |

3.2. Model evaluation

We merge the training and development subsets of the BioCreative V CDR corpus into a single training dataset, which is then divided into new training and validation/development data at a ratio of 85%:15%. To stop training at the right time, we apply early stopping on the F1-score on the new validation data.

The entire text is passed through a sentence splitter. Then, based on the disease and chemical names marked in the previous step, we select all sentences containing at least one pair of chemical-disease entities. With the sentences found, we classify the relation for each pair of chemical-disease entities. We train and evaluate the model 15 times on the new training and development sets; the averaged F1 on the test set is taken as the final evaluation result across the entire dataset, to make sure that the model works well on unfamiliar samples.

Finally, the models that achieve the best results at the sentence level are applied to the abstract-level problem for comparison with other very recent state-of-the-art methods.

3.3. Results and comparison

Experimental results show that the model achieves an averaged F1 of 57% (Precision of 55.6% and Recall of 58.6%) at the abstract level. Compared with its variant that does not use dependency relations, we observe a large improvement of about 2.6% in F1 (see Table 2). This indicates that dependency relations carry much information for relation extraction. POS tag and position information are also useful, contributing 0.9% of F1 improvement to the final performance of the model.

Table 2. Performance of our model with different linguistic information used as input

| Information used | Precision | Recall | F1 |
|---|---|---|---|
| Tokens only | 53.7 | 55.4 | 54.5 |
| Tokens, Dependency (depRE) | 55.7 | 56.8 | 56.2 |
| Tokens, depRE and POS tags | 55.7 | 57.5 | 56.6 |
| Tokens, depRE, POS and Position | 55.6 | 58.6 | 57.0 |

Compared with recent state-of-the-art models such as MASS [19], ASM [22], and the tree kernel based model [23], our model performs better (Table 3). Ours and MASS only exploit intra-sentence information (namely SDPs, POS and positions), ignoring prediction for cross-sentence relations, while the other two incorporate cross-sentence information. We note that cross-sentence relations account for 30% of all relations in the CDR dataset. This probably explains why ASM achieves better recall (67.4%) than our model (58.6%).

Table 3. Performance of our model in comparison with other state-of-the-art models

| Model | Relations | Precision | Recall | F1 |
|---|---|---|---|---|
| Zhou et al., 2016 | Intra- and inter-sentence | 64.9 | 49.2 | 56.0 |
| Panyam et al., 2018 | Intra- and inter-sentence | 49.0 | 67.4 | 56.8 |
| Le et al., 2018 | Intra-sentence | 58.9 | 54.9 | 56.9 |
| Our model | Intra-sentence | 55.6 | 58.6 | 57.0 |

4. Conclusion

This paper experimentally demonstrates that CNNs predict abstract-level chemical-induced disease relations in biomedical literature better when using concatenated input embedding channels rather than independent multiple channels. The opposite holds for biLSTM, for which multiple independent channels give better performance, as shown in a recent large-scale related study [Le et al., 2018]. To this end, this paper presents a model for prediction of chemical-induced disease relations in biomedical text based on a CNN with concatenated input embeddings. Experimental results on the benchmark dataset show that our model outperforms three recent state-of-the-art related models.

Acknowledgements

This research is funded by Vietnam National Foundation for Science and Technology Development (NAFOSTED) under grant number 102.05-2016.14.

References

[1] S.M. Paul, D.S. Mytelka, C.T. Dunwiddie, C.C. Persinger, B.H. Munos, S.R. Lindborg, A.L. Schacht, How to improve R&D productivity: The pharmaceutical industry's grand challenge, Nat Rev Drug Discov. 9(3) (2010) 203-14. https://doi.org/10.1038/nrd3078.
[2] J.A. DiMasi, New drug development in the United States from 1963 to 1999, Clinical pharmacology and therapeutics 69 (2001) 286-296. https://doi.org/10.1067/mcp.2001.115132.
[3] C.P. Adams, V. Van Brantner, Estimating the cost of new drug development: Is it really $802 million? Health Affairs 25 (2006) 420-428. https://doi.org/10.1377/hlthaff.25.2.420.
[4] R.I. Doğan, G.C. Murray, A. Névéol et al., Understanding PubMed user search behavior through log analysis, Oxford Database, 2009.
[5] G.K. Savova, J.J. Masanz, P.V. Ogren et al., Mayo clinical text analysis and knowledge extraction system (cTAKES): Architecture, component evaluation and applications, Journal of the American Medical Informatics Association, 2010.
[6] T.C. Wiegers, A.P. Davis, C.J. Mattingly, Collaborative biocuration-text mining development task for document prioritization for curation, Database 22 (2012) pp. bas037.
[7] N. Kang, B. Singh, C. Bui et al., Knowledge-based extraction of adverse drug events from biomedical text, BMC Bioinformatics 15, 2014.
[8] A. Névéol, R.I. Doğan, Z. Lu, Semi-automatic semantic annotation of PubMed queries: A study on quality, efficiency, satisfaction, Journal of Biomedical Informatics 44, 2011.
[9] L. Hirschman, G.A. Burns, M. Krallinger, C. Arighi, K.B. Cohen et al., Text mining for the biocuration workflow, Database Apr 18, 2012, pp. bas020.
[10] Wei et al., Overview of the BioCreative V Chemical Disease Relation (CDR) Task, Proceedings of the Fifth BioCreative Challenge Evaluation Workshop, 2015.
[11] P. Verga, E. Strubell, A. McCallum, Simultaneously Self-Attending to All Mentions for Full-Abstract Biological Relation Extraction, In: Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies 1 (2018) 872-884.
[12] Y. Shen, X. Huang, Attention-based convolutional neural network for semantic relation extraction, In: Proceedings of COLING 2016, the Twenty-sixth International Conference on Computational Linguistics: Technical Papers, The COLING 2016 Organizing Committee, Osaka, Japan, 2016, pp. 2526-2536.
[13] Y. Peng, Z. Lu, Deep learning for extracting protein-protein interactions from biomedical literature, In: Proceedings of the BioNLP 2017 Workshop, Association for Computational Linguistics, Vancouver, Canada, 2017, pp. 29-38.
[14] S. Liu, F. Shen, R. Komandur Elayavilli, Y. Wang, M. Rastegar-Mojarad, V. Chaudhary, H. Liu, Extracting chemical-protein relations using attention-based neural networks, Database, 2018.
[15] H. Zhou, H. Deng, L. Chen, Y. Yang, C. Jia, D. Huang, Exploiting syntactic and semantics information for chemical-disease relation extraction, Database, 2016, pp. baw048.
[16] S. Liu, B. Tang, Q. Chen et al., Drug–drug interaction extraction via convolutional neural networks, Comput. Math. Methods Med. (2016) 1-8. https://doi.org/10.1155/2016/6918381.
[17] L. Wang, Z. Cao, G. De Melo et al., Relation classification via multi-level attention CNNs, In: Proceedings of the Fifty-fourth Annual Meeting of the Association for Computational Linguistics 1 (2016) 1298-1307. https://doi.org/10.18653/v1/P16-1123.
[18] J. Gu, F. Sun, L. Qian et al., Chemical-induced disease relation extraction via convolutional neural network, Database (2017) 1-12. https://doi.org/10.1093/database/bax024.
[19] H.Q. Le, D.C. Can, S.T. Vu, T.H. Dang, M.T. Pilehvar, N. Collier, Large-scale Exploration of Neural Relation Classification Architectures, In: Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, 2018, pp. 2266-2277.
[20] Y. LeCun, L. Bottou, Y. Bengio, P. Haffner, Gradient-based learning applied to document recognition, Proceedings of the IEEE 86(11) (1998) 2278-2324.
[21] Y. Kim, Convolutional neural networks for sentence classification, ArXiv preprint arXiv:1408.5882.
[22] N.C. Panyam, K. Verspoor, T. Cohn, K. Ramamohanarao, Exploiting graph kernels for high performance biomedical relation extraction, Journal of biomedical semantics 9(1) (2018) 7.
[23] H. Zhou, H. Deng, L. Chen, Y. Yang, C. Jia, D. Huang, Exploiting syntactic and semantics information for chemical-disease relation extraction, Database, 2016.
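As an illustration of the multi-channel feature extraction described in Section 2.1, the following is a minimal NumPy sketch of one filter slid along each channel independently, followed by max pooling over all positions and channels. The function name `filter_feature`, the ReLU activation, the bias term and the toy input are assumptions made for this example; the paper does not specify them, and this is not the authors' implementation.

```python
import numpy as np

def filter_feature(x, w, b=0.0):
    """Apply one filter to every channel and max-pool the results.

    x: array of shape (n, d, c), a multi-channel embedded path.
    w: array of shape (k, d), one filter spanning k consecutive elements.
    Returns the pooled feature value and the (n - k + 1, c) feature maps.
    """
    n, d, c = x.shape
    k = w.shape[0]
    maps = np.empty((n - k + 1, c))
    for ch in range(c):                  # each channel is handled independently
        for i in range(n - k + 1):       # slide the filter along the path
            window = x[i:i + k, :, ch]
            # ReLU activation is an assumption of this sketch.
            maps[i, ch] = max(0.0, float(np.sum(window * w) + b))
    # Max pooling over all positions and all channels gives one value per filter.
    return float(maps.max()), maps

# Toy input: 4 path elements, embedding dimension 2, 3 channels.
x = np.arange(24, dtype=float).reshape(4, 2, 3)
w = np.ones((2, 2))                      # a filter covering 2 consecutive elements
value, maps = filter_feature(x, w)
print(maps.shape, value)
```

With the concatenated input of Section 2, the same routine would be called on an array with a single channel of width 3*d, so that every filter window covers token, POS and position information together.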
